
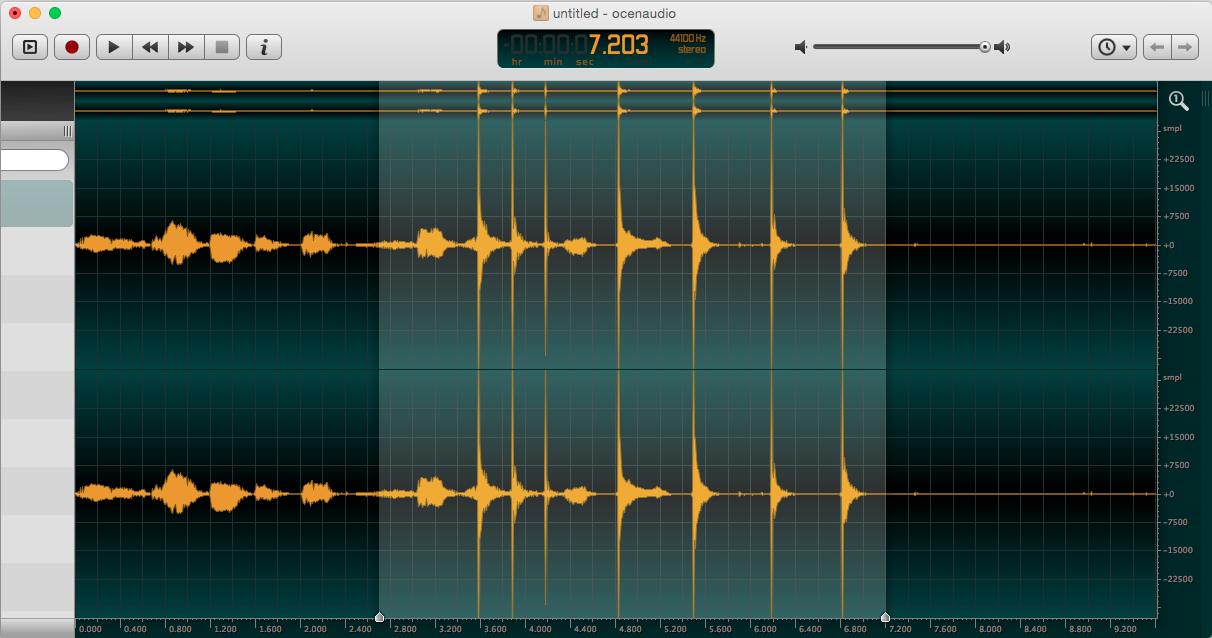

- Continuous song detection from the microphone, with the ability to choose your input device.
- Ability to recognize songs from your speakers rather than your microphone (on compatible PulseAudio setups).
- Generate a lure from a song that, when played, will fool Shazam into thinking that it is that song.

A (command-line only) Python version, which I made before rewriting in Rust for performance, is also available for demonstration purposes.

How it works

For useful information about how audio fingerprinting works, you may want to read this article.

Put simply, Shazam generates a spectrogram of the sound (a 2D time/frequency graph, with the amplitude at each intersection) and maps out the frequency peaks from it (which should match key points of the harmonics of voice or of certain instruments).

Shazam also downsamples the sound to 16 kHz before processing, and cuts it into four bands: 250-520 Hz, 520-1450 Hz, 1450-3500 Hz and 3500-5500 Hz (so that if one band is too heavily scrambled by noise, recognition can still rely on the other bands).

The frequency peaks are then sent to the servers, which look up the strongest peaks in a database and check for the simultaneous presence of neighboring peaks in both the associated reference fingerprints and the fingerprint we sent.

Hence, the Shazam fingerprinting algorithm, as implemented by the client, is fairly simple, as much of the processing is done server-side.
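
The client-side part described above (splitting into the four bands and picking spectrogram peaks) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not SongRec's or Shazam's actual code: it assumes the input is already mono floating-point PCM at 16 kHz, and the window size (1024), hop (512), periodic Hann window, magnitude `threshold` and the "strongest bin per band per frame" rule are hypothetical choices. `band_peaks` and `magnitude_spectrum` are illustrative names, and a naive DFT stands in for the FFT a real implementation would use.

```python
import cmath
import math

# The four bands quoted in the text, in Hz (lower bound inclusive).
BANDS = [(250, 520), (520, 1450), (1450, 3500), (3500, 5500)]
SAMPLE_RATE = 16_000  # assumed: audio already downsampled to 16 kHz, mono


def magnitude_spectrum(frame, lo_hz, hi_hz):
    """Naive DFT magnitudes for the bins between lo_hz and hi_hz.

    Returns {bin_index: magnitude}. This is O(N * bins); a real
    implementation would use an FFT instead.
    """
    n = len(frame)
    first = math.ceil(lo_hz * n / SAMPLE_RATE)
    last = min(n // 2, math.floor(hi_hz * n / SAMPLE_RATE))
    return {
        k: abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                   for t, x in enumerate(frame)))
        for k in range(first, last + 1)
    }


def band_peaks(samples, window_size=1024, hop=512, threshold=1.0):
    """Return (frame_index, band_index, freq_hz) for the strongest bin of
    each band in each frame, skipping bins weaker than `threshold`."""
    # Periodic Hann window to limit spectral leakage between bins.
    hann = [0.5 - 0.5 * math.cos(2 * math.pi * i / window_size)
            for i in range(window_size)]
    bin_hz = SAMPLE_RATE / window_size
    peaks = []
    starts = range(0, len(samples) - window_size + 1, hop)
    for frame_index, start in enumerate(starts):
        frame = [s * w for s, w in zip(samples[start:start + window_size], hann)]
        spectrum = magnitude_spectrum(frame, BANDS[0][0], BANDS[-1][1])
        for band_index, (lo, hi) in enumerate(BANDS):
            # Keep only the bins whose center frequency lies in this band.
            in_band = {k: m for k, m in spectrum.items() if lo <= k * bin_hz < hi}
            if not in_band:
                continue
            best = max(in_band, key=in_band.get)
            if in_band[best] >= threshold:
                peaks.append((frame_index, band_index, best * bin_hz))
    return peaks
```

For example, `band_peaks(samples, window_size=256, hop=128)` on a pure 1000 Hz sine yields one `(frame_index, 1, 1000.0)` entry per frame, since 1000 Hz falls in the 520-1450 Hz band and the other bands stay below the threshold. Picking peaks per band, rather than over the whole spectrum, is what lets the remaining bands still produce peaks when one of them is drowned in noise.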

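The server-side lookup is not public, so the following is only a sketch of one generic way to test for the "simultaneous presence of neighboring peaks": landmark-pair hashing with time-offset voting, swapped in here for illustration rather than as a description of Shazam's servers. It assumes peaks arrive as `(frame_index, freq_hz)` pairs with exactly comparable frequencies (real systems quantize them); `FingerprintIndex`, `landmark_hashes`, `fan_out`, `max_dt` and `min_votes` are hypothetical names and values.

```python
from collections import Counter, defaultdict


def landmark_hashes(peaks, fan_out=3, max_dt=10):
    """Pair each (frame_index, freq_hz) peak with up to `fan_out` later
    neighbors at most `max_dt` frames away; yield ((f1, f2, dt), t1)."""
    peaks = sorted(peaks)
    for i, (t1, f1) in enumerate(peaks):
        paired = 0
        for t2, f2 in peaks[i + 1:]:
            if paired >= fan_out or t2 - t1 > max_dt:
                break
            yield (f1, f2, t2 - t1), t1
            paired += 1


class FingerprintIndex:
    """Toy in-memory stand-in for the server-side fingerprint database."""

    def __init__(self):
        self._table = defaultdict(list)  # hash -> [(song_id, t_ref), ...]

    def add_song(self, song_id, peaks):
        for h, t_ref in landmark_hashes(peaks):
            self._table[h].append((song_id, t_ref))

    def best_match(self, query_peaks, min_votes=3):
        """Return (song_id, offset, votes), or None if too few pairs agree."""
        votes = Counter()
        for h, t_query in landmark_hashes(query_peaks):
            for song_id, t_ref in self._table.get(h, ()):
                # Neighbor pairs matching the same song should all agree
                # on one time offset between reference and query.
                votes[(song_id, t_ref - t_query)] += 1
        if not votes:
            return None
        (song_id, offset), count = votes.most_common(1)[0]
        return (song_id, offset, count) if count >= min_votes else None
```

For instance, indexing a reference `"a"` whose peak at frame `t` sits at `250 + 10 * t` Hz, then querying with its frames 10 to 19 renumbered from 0, returns `("a", 10, 24)`: all 24 query pairs agree on an offset of 10 frames. Hashing pairs of neighboring peaks makes each key far more specific than a single frequency, and requiring many keys to agree on one offset filters out chance collisions.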